The Model Context Protocol (MCP) and Agent-to-Agent (A2A) have gained significant industry attention over the past year. MCP first grabbed the world’s attention in dramatic fashion when it was published by Anthropic in November 2024, garnering tens of thousands of stars on GitHub within the first month. Organizations quickly saw the value of MCP as a way to abstract APIs into natural language, allowing LLMs to easily interpret and use them as tools. In April 2025, Google introduced A2A, a new protocol that allows agents to discover each other’s capabilities, enabling the rapid growth and scaling of agentic systems.

Both protocols are aligned with the Linux Foundation and are designed for agentic systems, but their adoption curves have differed significantly. MCP has seen rapid adoption, while A2A’s growth has been more of a slow burn. This has led to industry commentary suggesting that A2A is quietly fading into the background, with many people believing that MCP has emerged as the de facto standard for agentic systems.

How do these two protocols compare? Is there really an epic battle underway between MCP and A2A? Is this going to be Blu-ray vs. HD-DVD, or VHS vs. Betamax all over again? Well, not exactly. The reality is that while there is some overlap, they operate at different levels of the agentic stack and are both highly relevant.

MCP is designed as a way for an LLM to understand what external tools are available to it. Before MCP, these tools were exposed primarily through APIs. However, raw API handling by an LLM is clumsy and difficult to scale. LLMs are designed to operate in the world of natural language, where they interpret a task and identify the right tool capable of accomplishing it. APIs also suffer from issues related to standardization and versioning. For example, if an API undergoes a version update, how would the LLM know about it and use it correctly, especially when trying to scale across thousands of APIs? This quickly becomes a show-stopper. These were precisely the problems that MCP was designed to solve.

Architecturally, MCP works well, up to a point. As the number of tools on an MCP server grows, the tool descriptions and manifest sent to the LLM can become massive, quickly consuming the prompt’s entire context window. This affects even the largest LLMs, including those supporting hundreds of thousands of tokens. At scale, this becomes a fundamental constraint. Recently, there have been impressive strides in reducing the token count used by MCP servers, but even then, the scalability limits of MCP are likely to remain.
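To make the scaling concern concrete, here is a rough back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the tool description shape is hypothetical, the 4-characters-per-token ratio is a crude rule of thumb rather than a real tokenizer, and the 200,000-token window is just an example figure.

```python
import json

# Assumption: roughly 4 characters per token, a crude rule of thumb.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Crude token estimate from character count (not a real tokenizer)."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def make_tool(index: int) -> dict:
    """Build one hypothetical tool description with a small input schema."""
    return {
        "name": f"tool_{index}",
        "description": "Hypothetical tool that queries one network API endpoint "
                       "and returns a structured result for the agent to interpret.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "site": {"type": "string", "description": "Site identifier"},
                "window_minutes": {"type": "integer", "description": "Lookback window"},
            },
            "required": ["site"],
        },
    }


def manifest_share(tool_count: int, context_window: int) -> float:
    """Fraction of the context window consumed by the serialized tool manifest."""
    manifest = json.dumps([make_tool(i) for i in range(tool_count)])
    return estimate_tokens(manifest) / context_window


if __name__ == "__main__":
    # Print how the manifest's share of the window grows with the tool count.
    for count in (10, 100, 1000, 5000):
        share = manifest_share(count, context_window=200_000)
        print(f"{count:>5} tools -> {share:.1%} of a 200k-token window")
```

The manifest grows linearly with the number of tools, and that cost is paid before any system instructions, conversation state, or retrieved documents are added to the prompt.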

This is where A2A comes in. A2A does not operate at the level of tools or tool descriptions, and it doesn’t get involved in the details of API abstraction. Instead, A2A introduces the concept of Agent Cards, which are high-level descriptors that capture the overall capabilities of an agent, rather than explicitly listing the tools or detailed skills the agent can access. Additionally, A2A works exclusively between agents, meaning it does not have the ability to interact directly with tools or end systems the way MCP does.

So, which one should you use? Which one is better? Ultimately, the answer is both.

If you’re building a simple agentic system with a single supervisory agent and a variety of tools it can access, MCP alone can be a great fit, as long as the prompt stays compact enough to fit within the LLM’s context window (which has to hold the entire prompt budget: tool schemas, system instructions, conversation state, retrieved documents, and more). However, if you are deploying a multi-agent system, you will very likely need to add A2A into the mix.

Imagine a supervisory agent responsible for handling a request such as, “analyze Wi-Fi roaming problems and recommend mitigation strategies.” Rather than exposing every possible tool directly, the supervisor uses A2A to discover specialized agents (such as an RF analysis agent, a client authentication agent, and a network performance agent) based on their high-level Agent Cards. Once the appropriate agent is selected, that agent can then use MCP to discover and invoke the specific tools it needs. In this flow, A2A provides scalable agent-level routing, while MCP provides precise, tool-level execution.
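The two-level flow can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the A2A or MCP SDKs: `AgentCard` is a simplified, hypothetical stand-in for a real Agent Card, the keyword-overlap `score` function stands in for whatever reasoning a supervisor LLM would apply, and the `tools` dictionary stands in for tools an agent would discover through MCP. All agent names, descriptions, and tool results are made up.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentCard:
    """Simplified, hypothetical stand-in for an A2A Agent Card."""
    name: str
    description: str  # high-level capability summary only, no tool list


@dataclass
class SpecialistAgent:
    card: AgentCard
    # Stand-in for the tools this agent would discover through MCP.
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def handle(self, request: str) -> List[str]:
        """Invoke every local tool; a real agent would let its LLM choose."""
        return [tool(request) for tool in self.tools.values()]


def score(card: AgentCard, request: str) -> int:
    """Toy relevance score: number of words shared by request and card."""
    request_words = set(request.lower().split())
    card_words = set(card.description.lower().split())
    return len(request_words & card_words)


def route(request: str, agents: List[SpecialistAgent]) -> SpecialistAgent:
    """Agent-level routing: the supervisor sees only cards, never tools."""
    return max(agents, key=lambda agent: score(agent.card, request))


# Hypothetical specialists matching the example in the text.
AGENTS = [
    SpecialistAgent(
        AgentCard("rf-analysis",
                  "analyze rf coverage interference and wi-fi roaming behavior"),
        {"channel_scan": lambda req: "channel_scan: hypothetical result"},
    ),
    SpecialistAgent(
        AgentCard("client-auth",
                  "diagnose client authentication and 802.1x failures"),
        {"auth_log_search": lambda req: "auth_log_search: hypothetical result"},
    ),
    SpecialistAgent(
        AgentCard("network-performance",
                  "measure network performance latency and throughput"),
        {"latency_probe": lambda req: "latency_probe: hypothetical result"},
    ),
]

REQUEST = "analyze Wi-Fi roaming problems and recommend mitigation strategies"

if __name__ == "__main__":
    chosen = route(REQUEST, AGENTS)       # step 1: pick an agent by its card
    print("Routed to:", chosen.card.name)
    for line in chosen.handle(REQUEST):   # step 2: that agent runs its tools
        print(" ", line)
```

The design point is that `route` never touches `tools`: the supervisor's prompt only needs one short card per agent, while each specialist carries the detailed tool descriptions for its own domain.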

The key point is that A2A can, and often should, be used in concert with MCP. This isn’t an MCP versus A2A decision; it’s an architectural one, where both protocols can be leveraged as the system grows and evolves.

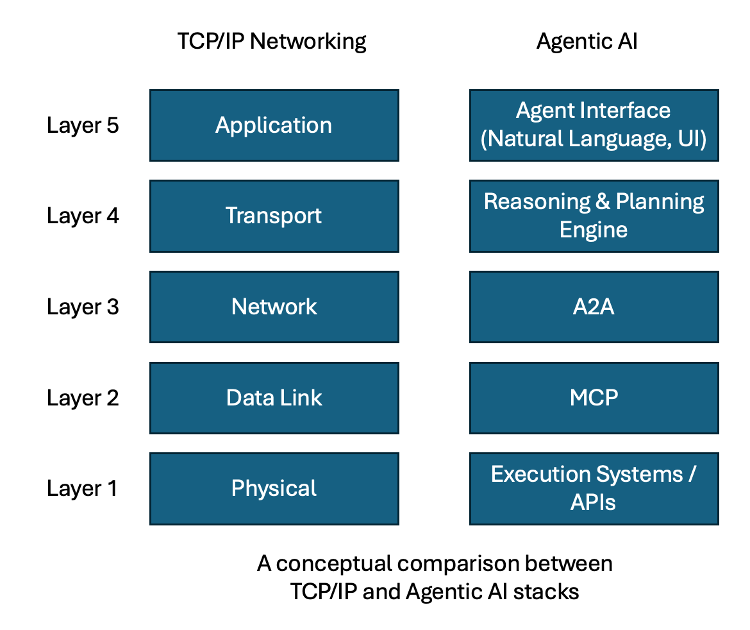

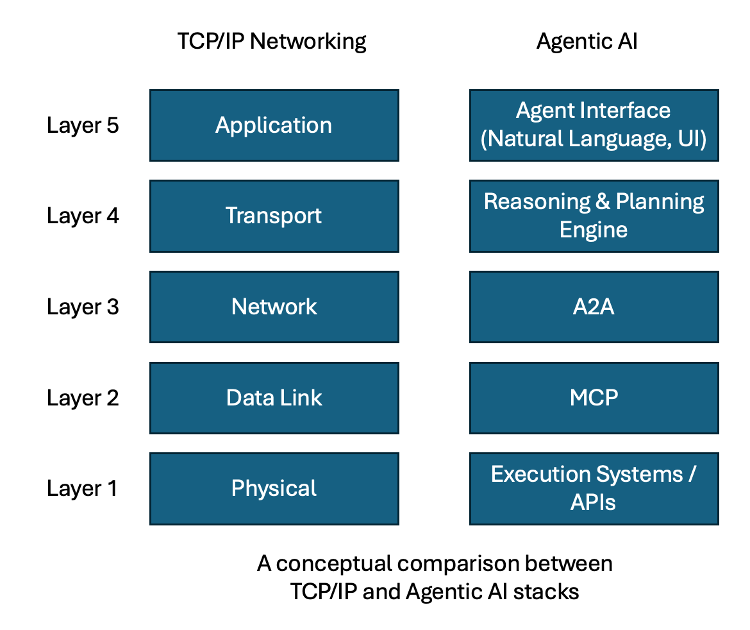

The mental model I like to use comes from the world of networking. In the early days of computer networking, networks were small and self-contained, and a single Layer-2 domain (the data link layer) was sufficient. As networks grew and became interconnected, the limits of Layer-2 were quickly reached, necessitating the introduction of routers and routing protocols, known as Layer-3 (the network layer). Routers function as boundaries for Layer-2 networks, allowing them to be interconnected while also preventing broadcast traffic from flooding the entire system. At the router, networks are described in higher-level, summarized terms, rather than exposing all the underlying detail. For a computer to communicate outside of its immediate Layer-2 network, it must first discover the nearest router, knowing that its intended destination exists somewhere beyond that boundary.

This maps closely to the relationship between MCP and A2A. MCP is analogous to a Layer-2 network: it provides detailed visibility and direct access, but it does not scale indefinitely. A2A is analogous to the Layer-3 routing boundary, which aggregates higher-level information about capabilities and provides a gateway to the rest of the agentic network.

The comparison may not be a perfect fit, but it offers an intuitive mental model that resonates with those who have a networking background. Just as modern networks are built on both Layer-2 and Layer-3, agentic AI systems will eventually require the full stack as well. In this light, MCP and A2A should not be thought of as competing standards. In time, they will likely both become critical layers of the larger agentic stack as we build increasingly sophisticated AI systems.

The teams that recognize this early will be the ones that successfully scale their agentic systems into robust, production-grade architectures.
